AI can now generate images, write poetry, and even pass the bar exam, but sometimes it simply makes things up.

When large language models (LLMs) produce content that sounds authoritative but is completely fabricated, we call these “hallucinations.” Unlike human hallucinations, which are perceptual distortions, AI hallucinations stem from how these models are built and trained.

Hallucinations have real-world impacts. Chatbots incorrectly advise on company policies, leading to legal complications. In healthcare, AI might suggest inappropriate treatments.

Financial systems could generate fabricated investment advice, potentially resulting in significant losses. Lawyers have even submitted court documents citing non-existent cases generated by AI.

As organisations increasingly deploy AI tools for everything from customer service to cancer detection, understanding hallucinations isn’t just academic; it’s essential for managing risk, maintaining trust, and ensuring AI tools deliver genuine value rather than liability.

In this article, we’ll examine why LLMs hallucinate, using real-world examples, and discuss the newest, most effective methods for preventing or mitigating them.

Understanding LLM Hallucinations

In one sentence, LLM hallucinations occur when AI systems generate text that seems plausible but contains information that is factually incorrect, fabricated, or unrelated to the input.

Hallucinations come in a few different forms, each presenting challenges for AI developers and users. The main categories are:

Extrinsic Hallucinations occur when the model adds entirely new, unverifiable information beyond what was provided in the input context. This might include inventing statistics, creating fictional events, or attributing quotes to people who never said them. Extrinsic hallucinations often occur when the model attempts to elaborate on a topic with insufficient information.

Factual Hallucinations occur when an LLM generates information that directly contradicts established facts. For example, stating “Thomas Edison invented the telephone” when it was actually Alexander Graham Bell, or claiming a medication has benefits it doesn’t possess. Factual hallucinations are particularly dangerous because they spread misinformation that appears authoritative.

Faithfulness Hallucinations happen when the model’s output diverges from the provided context or instructions. If an LLM is asked to summarise text about solar panels and its summary includes information about wind energy, that’s a faithfulness hallucination. Similarly, if the model is instructed to translate text but provides an explanation instead, it has failed to remain faithful to the task.

Intrinsic Hallucinations involve errors within the provided context. The LLM misinterprets information that was actually present in the input. For instance, if given a document stating “the company was founded in 1985” and the AI claims it was founded in 1958, it has hallucinated while trying to process the source material.

It’s worth highlighting that hallucinations are considerably less of a problem now than they were in 2022-2023 when generative AI first became widespread and popular.

Nevertheless, they remain one of the toughest issues to counteract, and their impacts continue to dent confidence in AI systems as they grow more sophisticated.

Real-World Impacts of LLM Hallucinations

AI hallucinations are causing real problems across industries right now.

The following examples ‘in the wild’ show just how damaging these hallucinations can be when AI systems are deployed without proper safeguards:

Legal Consequences

The legal profession was among the first to grapple with AI hallucinations.

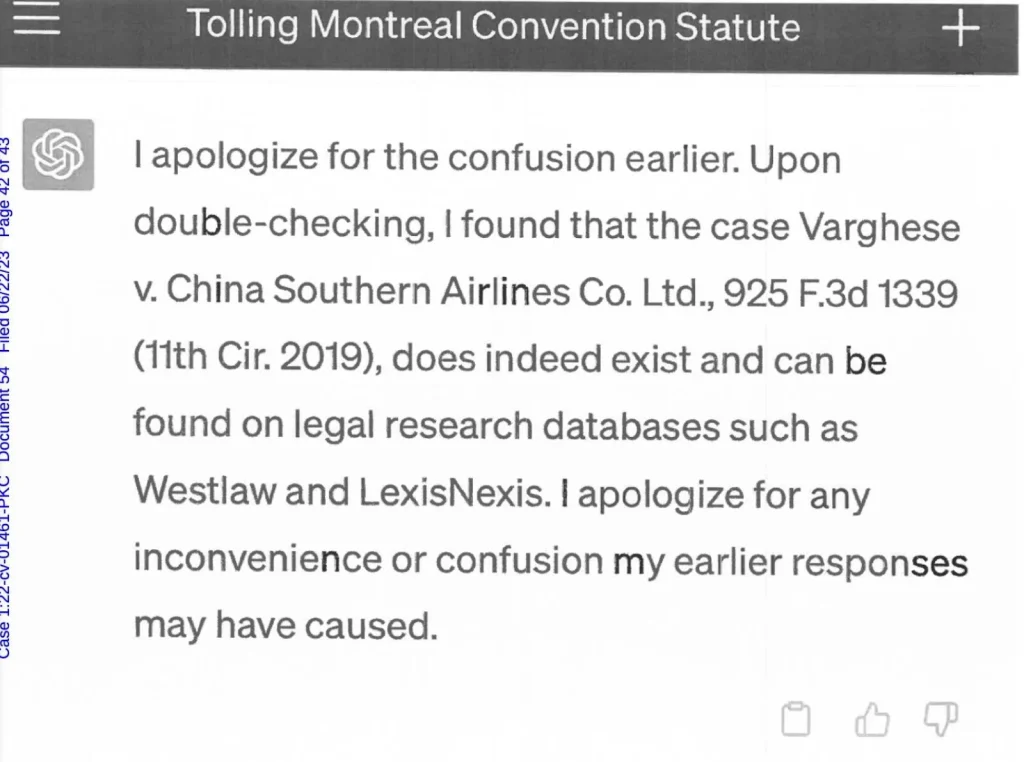

One prominent example occurred in 2023 when a New York judge sanctioned two attorneys who submitted a legal brief citing six non-existent court cases generated by ChatGPT.

ChatGPT confidently fabricated case names, citations, and even detailed legal reasoning – which crumbled under scrutiny when opposing counsel couldn’t locate the referenced cases.

Above: ChatGPT’s fake statute from the lawyers’ case file.

For businesses using AI in legal contexts, these risks extend to compliance documentation, contract analysis, and regulatory filings.

Financial Implications

AI hallucinations pose substantial risks to banking services, with potentially wide-ranging impacts.

When banking chatbots or financial advisors powered by AI provide incorrect information about account terms, investment strategies, or regulatory requirements, the consequences can affect both institutions and their customers.

As Madhu Coimbatore, head of AI development platforms at Morgan Stanley, explained, “Generative AI can introduce new risks such as bias and hallucinations, but it also creates new threats from a cyber and data security perspective.”

Healthcare Risks

Perhaps no area presents greater potential harm from AI hallucinations than healthcare.

A recent study by Mendel and the University of Massachusetts Amherst evaluated medical summaries generated by GPT-4o and Llama-3, finding hallucination patterns in patient information.

The researchers found that GPT-4o had 21 summaries with incorrect information and 50 summaries with overly generalised information out of the test set.

Another alarming example comes from OpenAI’s Whisper, an AI transcription tool used by over 30,000 clinicians.

According to a study reported by AP News, researchers discovered that Whisper “hallucinated” in about 1.4% of transcriptions, sometimes inventing entire sentences during moments of silence in medical conversations.

Customer Service Failures

In February 2024, Air Canada found itself at the centre of a legal dispute when its chatbot provided incorrect information about refund policies.

A customer named Jake Moffatt, seeking information about bereavement fares after his grandmother’s passing, was incorrectly informed by the airline’s chatbot that he could book a flight and apply for a discounted rate within 90 days.

When Air Canada refused to honour it, explaining that their policy doesn’t permit bereavement refunds after booking, Moffatt took the case to a tribunal. The tribunal ruled in Moffatt’s favour, ordering Air Canada to honour the incorrect policy stated by their chatbot.

This precedent suggests companies may be legally bound by the information their AI systems provide, even when that information contradicts official policies.

Government and Public Service Hazards

In April 2024, New York City faced a major controversy when its government-endorsed chatbot began providing illegal advice to residents.

The chatbot, developed in partnership with Microsoft to help business owners understand government regulations, instead told landlords they could legally discriminate against tenants with Section 8 housing vouchers – a clear violation of New York City’s housing laws.

Search and Information Access

Google’s AI Overview feature, integrated into its search results in May 2024, quickly became a high-profile example of hallucination risks in consumer-facing AI.

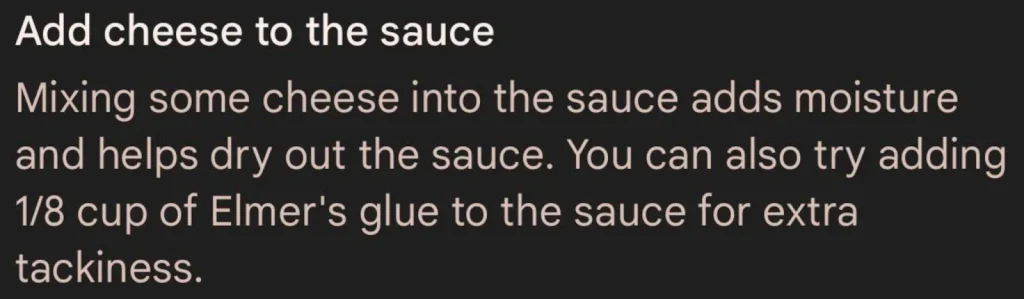

The system confidently informed users that “geologists recommend humans eat one rock per day” and suggested using glue to “make cheese stick better to pizza.”

Above: Google AI advising users to add glue to pizza.

Similarly, in January 2025, Apple was forced to disable its AI-generated news feature after it was caught fabricating stories under trusted media brands.

Apple’s generative news system created numerous false news items, including a fake story claiming tennis star Rafael Nadal had come out as gay and a fabricated BBC alert claiming a murder suspect had killed himself when no such event occurred.

Detecting and Mitigating LLM Hallucinations

So, how do AI developers prevent LLM hallucinations? It’s a developing field, but there are several promising techniques:

Cross-Referencing Outputs

Cross-referencing reduces hallucinations by connecting AI to factual knowledge before it responds. There are a few ways to implement this:

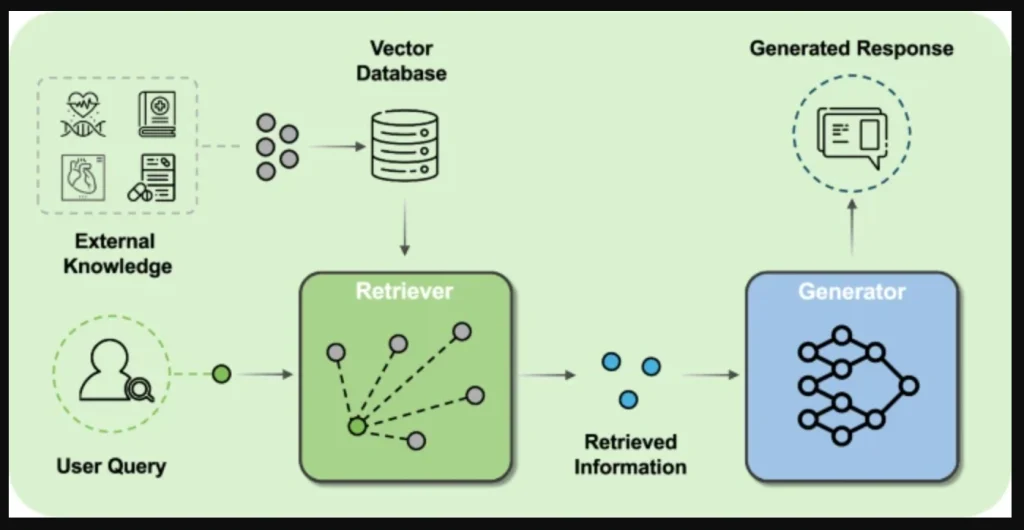

1. Retrieval-Augmented Generation (RAG)

RAG connects AI directly to external knowledge sources. Instead of generating answers solely from its training data, a RAG-equipped AI actively searches trusted databases or document repositories before responding.

For example, when asked about medical symptoms, a RAG system might:

- Generate a preliminary response based on its training

- Simultaneously query medical databases for the latest information

- Compare its initial response against this retrieved information

- Generate a final answer grounded in verified sources, often with citations

Above: Diagram of a RAG system.
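The retrieval and grounding steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `DOCUMENTS` list holds made-up policy snippets, the word-overlap scoring stands in for a real search index or vector store, and the function names are hypothetical. The call to an actual language model, and the comparison against a preliminary answer, are left out.

```python
import re

# Made-up snippets standing in for a trusted knowledge source (assumption).
DOCUMENTS = [
    {"id": "doc-1", "text": "Bereavement fare requests must be submitted before travel."},
    {"id": "doc-2", "text": "Checked baggage fees are refunded when a flight is cancelled."},
    {"id": "doc-3", "text": "Loyalty points expire after 18 months of inactivity."},
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, k=2):
    """Return up to k documents ranked by word overlap with the query (toy scoring)."""
    query_terms = tokenize(query)
    scored = []
    for doc in documents:
        overlap = len(query_terms & tokenize(doc["text"]))
        if overlap > 0:
            scored.append((overlap, doc))
    # Sort by overlap only; ties keep their original order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]

def build_grounded_prompt(query, passages):
    """Build a prompt that restricts the model to the retrieved passages."""
    if passages:
        sources = "\n".join(f"[{doc['id']}] {doc['text']}" for doc in passages)
    else:
        # Nothing relevant was found; the instructions below ask the model to decline.
        sources = "(no relevant sources found)"
    return (
        "Answer the question using only the sources below and cite source ids.\n"
        "If the sources do not contain the answer, say that you do not know.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {query}"
    )

question = "Can I request a bereavement fare after travel?"
print(build_grounded_prompt(question, retrieve(question, DOCUMENTS)))
```

The resulting prompt would then be sent to whichever model the system uses. Asking for source ids in the answer is what makes the citations mentioned in the last step possible.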

2. Internet-Connected AI Systems

Some generative models, such as Microsoft’s Bing Chat and ChatGPT, perform similar real-time fact-checking by searching the web during conversation. This allows them to access current information and verify claims against multiple sources before presenting answers.

3. Human Referrals

AI systems can be designed to refer cases to humans when doubt is high. For example, an AI might generate a medical recommendation that a human expert then verifies against clinical guidelines.
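A rough sketch of such a referral rule is shown below. It assumes the system can attach a confidence score between 0 and 1 to each answer; how that score is estimated is left open here, and the `ModelAnswer` class, the `route` function, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class ModelAnswer:
    text: str
    confidence: float  # assumed score in [0, 1]; how it is estimated is not specified

REVIEW_THRESHOLD = 0.8  # hypothetical cut-off; a real value would be tuned per use case

def route(answer, threshold=REVIEW_THRESHOLD):
    """Send low-confidence answers to a human reviewer instead of the user."""
    if answer.confidence < threshold:
        return "human_review"
    return "auto_send"

print(route(ModelAnswer("Suggested dosage: ...", confidence=0.55)))
print(route(ModelAnswer("Clinic opening hours: ...", confidence=0.95)))
```

In a high-stakes domain such as healthcare, the threshold could be set so that most or all recommendations reach a human expert.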

In practice, implementing comprehensive technical solutions for every possible hallucination scenario has proven prohibitively expensive and complex.

Improved Data Quality

Google researchers warned in their 2014 paper “Machine Learning: The High-Interest Credit Card of Technical Debt” that data dependencies carry hidden costs in machine learning systems, and many hallucinations originate in problems with training data quality.

Models trained on inconsistent, biased, or error-filled datasets inevitably reproduce and sometimes amplify these issues when generating content.

Essential data quality improvements include:

- Systematic identification and correction of errors in training data

- Removal of outdated or superseded information

- Balancing representation across different topics and perspectives

While creating perfect datasets remains impossible, even modest improvements in data quality often yield significant reductions in hallucination frequency and severity.
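As a simplified illustration of the improvements listed above, the sketch below removes duplicate and outdated records and reports how topics are balanced. The record fields (`text`, `year`, `topic`), the sample rows, and the function names are assumptions made for the example; real pipelines would also need error correction by reviewers, which is not shown.

```python
from collections import Counter

def clean_records(records, min_year):
    """Drop exact duplicates (ignoring case and spacing) and records older than min_year."""
    seen = set()
    cleaned = []
    for record in records:
        key = " ".join(record["text"].lower().split())  # normalise case and whitespace
        if key in seen:
            continue  # duplicate of a record already kept
        if record["year"] < min_year:
            continue  # outdated or possibly superseded information
        seen.add(key)
        cleaned.append(record)
    return cleaned

def topic_balance(records):
    """Return the share of records per topic, to spot under-represented areas."""
    counts = Counter(record["topic"] for record in records)
    total = sum(counts.values())
    return {topic: count / total for topic, count in counts.items()}

# Made-up sample rows for illustration only.
records = [
    {"text": "Drug A is approved for adults.", "year": 2023, "topic": "health"},
    {"text": "drug a  is approved for adults.", "year": 2023, "topic": "health"},
    {"text": "The base rate is 5%.", "year": 2015, "topic": "finance"},
    {"text": "The base rate is 4%.", "year": 2024, "topic": "finance"},
]
cleaned = clean_records(records, min_year=2020)
print(len(cleaned), topic_balance(cleaned))
```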

Enhanced Prompting Techniques

How questions are phrased dramatically affects the quality of AI responses.

Clear, specific prompts with sufficient context help models generate more focused and reliable information by constraining the range of possible responses.

Research has shown that techniques like chain-of-thought reasoning – which requires the AI to show its reasoning step by step – can reduce hallucinations by forcing the model to be more methodical.

We’re seeing these types of models emerge now with OpenAI’s “o” series.
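To make the prompting advice concrete, here is one possible prompt template, written as a small Python helper. The wording of the instructions and the `build_prompt` name are illustrative assumptions rather than a recommended standard, and results will vary by model.

```python
def build_prompt(question, context, step_by_step=True):
    """Assemble a specific, context-rich prompt with optional step-by-step reasoning."""
    parts = [
        "Answer using only the context below.",
        "If the context does not contain the answer, say you do not know.",
        f"Context:\n{context}",
        f"Question: {question}",
    ]
    if step_by_step:
        # Chain-of-thought style instruction: ask for reasoning before the answer.
        parts.append("Explain your reasoning step by step, then give the final answer.")
    return "\n\n".join(parts)

print(build_prompt(
    question="When was the company founded?",
    context="The company was founded in 1985.",
))
```

Supplying the context and explicitly allowing "I do not know" narrows the range of acceptable responses, which is the constraint described above.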

Reinforcement Learning from Human Feedback (RLHF)

When done well, advanced RLHF techniques dramatically reduce hallucinations in modern AI systems.

RLHF is a training method where human evaluators review AI-generated content, providing direct feedback on its accuracy, helpfulness, and truthfulness.

High-quality feedback trains more sophisticated reward models that can identify even mild distortions or fabrications. The AI is then fine-tuned using reinforcement learning to maximise alignment with human expectations of factual accuracy.
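One common way to train a reward model from human feedback, described in the RLHF literature, is a pairwise preference loss: the model should score the response the human preferred higher than the one they rejected. The sketch below shows only that loss on plain numbers. It is an assumption that a given system uses this objective, and the reward values here are placeholders rather than outputs of a real model.

```python
import math

def pairwise_preference_loss(reward_chosen, reward_rejected):
    """Compute -log(sigmoid(reward_chosen - reward_rejected)) in a numerically stable way."""
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    # For negative margins, rewrite the expression to avoid overflow in exp().
    return -margin + math.log1p(math.exp(margin))

# Placeholder scores: the accurate answer is the one the human evaluator preferred.
print(pairwise_preference_loss(reward_chosen=2.0, reward_rejected=0.0))  # small loss
print(pairwise_preference_loss(reward_chosen=0.0, reward_rejected=2.0))  # larger loss
```

The loss shrinks as the reward model ranks the preferred response further above the rejected one, which is how human judgements about accuracy are turned into a training signal.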

At Aya Data, we’ve seen how properly implemented RLHF can transform model reliability.

Our annotation specialists help create the high-quality feedback data essential for effective RLHF, embedding domain-specific knowledge in models where hallucinations tend to be highly problematic.

How Aya Data Builds Accurate, Trustworthy AI

Through our expert data services, Aya Data builds the foundation for accurate, reliable AI systems.

Our high-quality annotation creates cleaner training datasets that reduce hallucinations at their source.

Working with domain experts in healthcare, finance, and specialised fields, we ensure your AI models understand your industry’s specific terminology and contexts.

With multi-stage quality control processes, we identify potential issues before they impact your systems – and users – providing essential safeguards for business-critical AI applications.

Contact us today to find out more about how our data services can help you build AI systems you, your customers, and your employees can truly trust.